
GenAI model evaluation metric — ROUGE

In supervised learning, we use metrics such as R-squared, ROC AUC, precision and recall, or the F-score to evaluate a model's performance. How is a Large Language Model evaluated? Large Language Models are Transformer-based models built on complex neural networks, and they still learn from input-output pairs, just as in a supervised learning framework. They also still use the typical train/validation/test data split. The language datasets they are trained on provide fields that serve as the input and the output of the neural network.

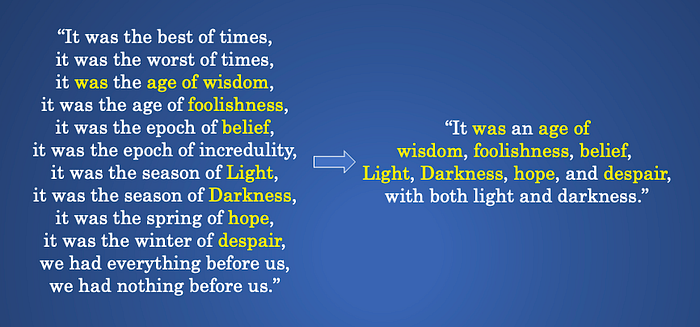

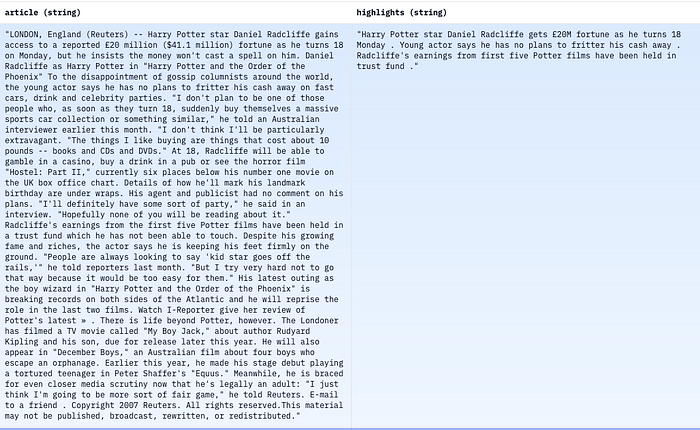

LLMs can perform text summarization: they paraphrase or rewrite a long article into a short summary. This sounds very “unsupervised”, right? In fact, training still follows the supervised-learning framework. The model is trained on records with the ‘article’ as the input field and the ‘summary’ as the output field. A well-known language dataset that many LLMs were trained or fine-tuned with is “CNN/DailyMail”. It contains about 300k unique news articles written by journalists at CNN and the Daily Mail, with the ‘article’ field and a summary field called ‘highlights’. Once an LLM is trained and ready to be tested on the validation data, it takes the ‘article’ field as input and produces a summarized version as the ‘prediction’. The prediction is then compared with the ground truth, the ‘highlights’ field, to evaluate the model's performance.
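To make the input/prediction/ground-truth flow concrete, here is a minimal sketch of such an evaluation loop. It assumes records shaped like CNN/DailyMail, with an ‘article’ and a ‘highlights’ field, but the two records are made-up toy text, not taken from the dataset. `summarize` is a hypothetical stand-in for the LLM (it simply returns the first sentence), and `unigram_overlap_recall` is a deliberately simple stand-in for a real metric such as ROUGE.

```python
import re
from collections import Counter

# Toy records (made up for illustration) in the same shape as CNN/DailyMail:
# 'article' is the model input, 'highlights' is the reference summary.
validation_records = [
    {"article": "The council approved the new park. Construction starts in May. Residents welcomed the plan.",
     "highlights": "The council approved a new park, with construction starting in May."},
    {"article": "Heavy rain flooded the main road. Drivers were told to avoid the area.",
     "highlights": "Heavy rain flooded the main road on Tuesday."},
]


def summarize(article: str) -> str:
    # Hypothetical stand-in for an LLM: return only the first sentence.
    return article.split(". ")[0].rstrip(".") + "."


def tokenize(text: str) -> list[str]:
    # Lowercase and keep only word characters, so "park," matches "park".
    return re.findall(r"\w+", text.lower())


def unigram_overlap_recall(prediction: str, reference: str) -> float:
    # Stand-in metric: share of reference words also found in the prediction
    # (counts are clipped, so a repeated word only matches as often as it appears).
    pred, ref = Counter(tokenize(prediction)), Counter(tokenize(reference))
    overlap = sum((pred & ref).values())
    total = sum(ref.values())
    return overlap / total if total else 0.0


def evaluate(records: list[dict]) -> float:
    scores = []
    for record in records:
        prediction = summarize(record["article"])            # model output
        score = unigram_overlap_recall(prediction, record["highlights"])  # vs. ground truth
        scores.append(score)
    return sum(scores) / len(scores)                          # average over the split


print(round(evaluate(validation_records), 3))
```

In a real setup, `summarize` would call the trained model and the comparison would use an established metric; the loop structure (input field in, prediction out, compare against the reference field, average) stays the same.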

With that in mind, this post explains the metrics used to evaluate an LLM's performance in text summarization. The most cited ones are the ROUGE metrics, a family that includes ROUGE-1, ROUGE-2, ROUGE-L, and others. In this post, I will walk you through how they work and the range of scores LLMs typically achieve in text summarization, and clear up a few points that often confuse readers. I’ll use the ROUGE library to show how they are calculated. After reading this article, you will have a much better understanding of the strengths of these evaluation metrics. The code is available in this Jupyter notebook.
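As a rough preview of what the ROUGE family measures, the sketch below computes ROUGE-N (overlapping n-grams) and ROUGE-L (longest common subsequence) from scratch, each reported as precision, recall, and F1. It is not the ROUGE library used in this post: the whitespace tokenizer is an assumption, and real implementations typically also strip punctuation and may apply stemming, so their scores can differ slightly.

```python
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Simplified tokenizer (an assumption): lowercase and split on whitespace.
    return text.lower().split()


def ngrams(tokens: list[str], n: int) -> Counter:
    # Multiset of n-grams; Counter keeps duplicates so overlaps are clipped.
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def prf(overlap: int, pred_total: int, ref_total: int) -> dict[str, float]:
    # Precision: overlap relative to the prediction; recall: relative to the reference.
    precision = overlap / pred_total if pred_total else 0.0
    recall = overlap / ref_total if ref_total else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


def rouge_n(prediction: str, reference: str, n: int) -> dict[str, float]:
    pred, ref = ngrams(tokenize(prediction), n), ngrams(tokenize(reference), n)
    overlap = sum((pred & ref).values())  # clipped count of shared n-grams
    return prf(overlap, sum(pred.values()), sum(ref.values()))


def lcs_length(a: list[str], b: list[str]) -> int:
    # Dynamic-programming longest common subsequence (words in order, gaps allowed).
    dp = [[0] * (len(b) + 1) for _ in range(len(a) + 1)]
    for i, x in enumerate(a, 1):
        for j, y in enumerate(b, 1):
            dp[i][j] = dp[i - 1][j - 1] + 1 if x == y else max(dp[i - 1][j], dp[i][j - 1])
    return dp[-1][-1]


def rouge_l(prediction: str, reference: str) -> dict[str, float]:
    pred, ref = tokenize(prediction), tokenize(reference)
    return prf(lcs_length(pred, ref), len(pred), len(ref))


prediction = "the cat sat on the mat"
reference = "the cat lay on the mat"
print(round(rouge_n(prediction, reference, 1)["f1"], 3))  # 0.833 (5 of 6 unigrams shared)
print(round(rouge_n(prediction, reference, 2)["f1"], 3))  # 0.6 (3 of 5 bigrams shared)
print(round(rouge_l(prediction, reference)["f1"], 3))     # 0.833 (LCS of 5 words)
```

The example shows why the variants differ: swapping one word costs ROUGE-1 a single unigram, but it breaks two bigrams, so ROUGE-2 drops further. ROUGE-L rewards words that appear in the same order even when they are not adjacent.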

Let’s start with something very intuitive. If we want to assess the summarization quality, we can count the overlapping words in both the original article and the summarized…